Basel IV: Canada

Early out of the gate, Canada’s move emphasizes the imminence of Basel IV adoption across the globe.

What’s changed?

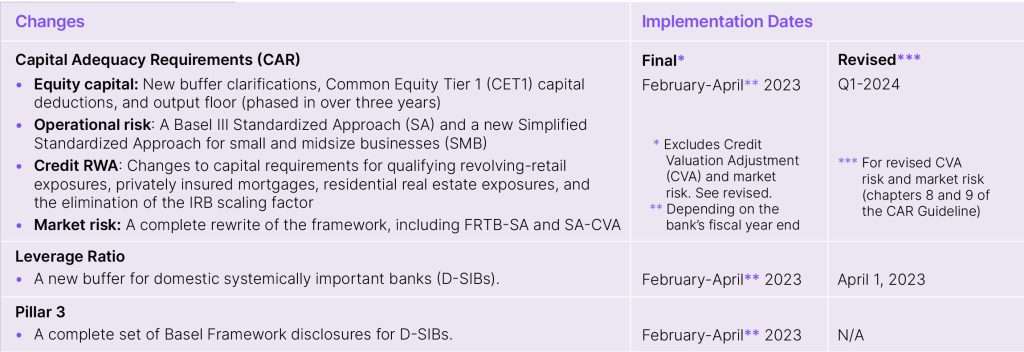

For Canada, one of the first jurisdictions in the world to go live with the so-called Basel IV, the revisions incorporate the final round of internationally agreed-upon Basel III reforms into the Office of the Superintendent of Financial Institutions’ (OSFI) capital, leverage, liquidity, and related disclosure guidelines for deposit-taking institutions (DTIs). The reforms are a starting point for modifications that account for unique characteristics of the Canadian market, i.e., OSFI-regulated banks, especially internal ratings-based (IRB) banks, large retail banks, and capital markets businesses.

The final regulatory reporting disclosure, which has more than doubled in size since the previous version, contains more than 30,000 validation rules. Structural changes to the risk-shifting logic within the report require a significant rewrite of the reporting logic, in addition to changes in the underlying calculations, capital treatment eligibility, and the operational risk and credit risk-weighted assets (RWA) calculations. There are also significant changes to the calculation rules for qualifying retail exposures, securities financing transactions (SFT) netting, loss given default (LGD) calculation methodology, and allocation rules for private mortgage insurance, among others.

Why is this important?

These go-live dates represent more than just the implementation of new and complex calculations, new templates, more granular requirements, and higher volumes of disclosures. Because they impact institutions end to end, something regulators and supervisors are following keenly, they also represent a strategic opportunity for banks implementing the new global rules, provided they get their response to the technical and functional challenges right.

- More risk-sensitive calculations mean that institutions need better controls over the upstream data lakes and feeds that bring in the new data elements. For banks using the Advanced Approach, the mechanics of individual calculation modules have increased in complexity, requiring them to now also, or instead, use the Standardized Approach. In turn, these new, complex calculations must be validated, requiring greater transparency into the process.

- The introduction of an output floor creates the need to efficiently re-run the entire dataset under the Standardized Approach, along with higher-volume runs that can subsequently impact the organization’s optimization strategy if the system is not able to scale adequately.

- Increased disclosure exacerbates performance issues brought about by the need to re-run datasets, particularly when issues are only detectable at the reporting level. Increased disclosure granularity can also aggravate performance problems, hampering visibility into and traceability of the results. Overlapping or interconnected disclosures must also be reconciled.
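
To make the output floor mentioned above concrete, here is a minimal Python sketch of the basic mechanics: total RWA is the higher of the modelled figure and a fixed fraction of the figure obtained by re-running the same exposures under the Standardized Approach. The names `RwaResult`, `floored_rwa`, and `floor_add_on` are hypothetical and chosen for illustration only. The default factor of 72.5% is the BCBS end-state calibration; individual jurisdictions may phase it in on their own schedule, so it is treated as a parameter here rather than as the value any particular regulator applies at a given date.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RwaResult:
    """Hypothetical container for the two RWA totals the floor compares."""
    modelled_rwa: float      # total RWA using internal-model approaches
    standardized_rwa: float  # total RWA from the Standardized Approach re-run


def floored_rwa(result: RwaResult, floor_factor: float = 0.725) -> float:
    """Return RWA after applying the output floor.

    floor_factor is an assumption: 0.725 is the BCBS end-state value,
    but a jurisdiction's phase-in schedule may specify a lower factor.
    """
    if not 0.0 < floor_factor <= 1.0:
        raise ValueError("floor_factor must be in (0, 1]")
    floor = floor_factor * result.standardized_rwa
    return max(result.modelled_rwa, floor)


def floor_add_on(result: RwaResult, floor_factor: float = 0.725) -> float:
    """Extra RWA the floor adds on top of the modelled figure (zero if not binding)."""
    return floored_rwa(result, floor_factor) - result.modelled_rwa


if __name__ == "__main__":
    # Illustrative numbers only, not taken from any real institution.
    binding = RwaResult(modelled_rwa=60.0, standardized_rwa=100.0)
    not_binding = RwaResult(modelled_rwa=80.0, standardized_rwa=100.0)
    print(floored_rwa(binding), floor_add_on(binding))
    print(floored_rwa(not_binding), floor_add_on(not_binding))
```

Even in this simplified form, the floor cannot be evaluated without the full Standardized Approach total, which is why the second run over the entire dataset becomes a scaling concern.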

For Canada, one of the first countries to go live, the world is watching.

What’s next?

For firms seeking to use their own models, there are stricter requirements regarding the quality of the data and calculations. This means the capital calculations themselves must be changed to incorporate more risk factors and various liquidity horizons. The need to address these issues warrants a flexible operational risk management ecosystem and scenario-building capabilities that:

- Scale for massive data volumes, granularity, and number of users.

- Cover both banking and trading books with a strategic approach for both credit and market risk.

- Provide the data governance and control for data lineage and transparency.

- Support the use of a single dataset for optimization, stress testing, and what-if analysis.

Beyond that, the situation calls for a global solution, i.e., one that hews closely to the BCBS’ standard Basel IV rules but is flexible enough to accommodate jurisdictional variations with minimal adjustments.